Wednesday, November 21, 2012

Wednesday, November 7, 2012

When truths don't commute. Inconsistent histories.

A short introduction to Consistent Histories after some trivial appetizer

When the uncertainty principle is being presented, people usually – if not always – talk about the position and the momentum or analogous dimensionful quantities. That leads most people to either ignore the principle completely or think that it describes just some technicality about the accuracy of apparatuses.

However, most people don't change their idea of what information is and how it behaves. They believe that there exists some sharp objective information, after all. Nevertheless, these ideas are incompatible with the uncertainty principle. Let me explain why the uncertainty principle applies to the truth, too.

Every proposition we can make about objects in Nature and their properties may be determined by a measurement and mathematically summarized as a Hermitian projection operator \(P\),\[

P = P^\dagger, \quad P^2=P.

\] The first condition is the hermiticity condition; the second one is the "idempotence" condition (Latin word for "the same [as its] powers") that defines the projection operators. The second condition implies that eigenvalues have to obey the same identity, \(p^2=p\), which means that the eigenvalues have to be \(0\) or \(1\). We will identify \(1\) with "truth" and "true" while \(0\) will be identified with "lie" and "false".

In some sense, you could say that \(P^2=P\) is more fundamental and \(p\in\{0,1\}\) is derived. The very claim that there are two truth values, "true" and "false", may be viewed as a derived fact in quantum mechanics, a result of a calculation. This is a toy model of the fact that many seemingly trivial facts result from calculations in quantum mechanics and some of these facts are only approximately true under the everyday circumstances and they are untrue at the fundamental level.

The first condition, hermiticity, implies that eigenstates of \(P\) associated with eigenvalue "false" (\(0\)) are orthogonal to those with the eigenvalue "true" (\(1\)). This is what allows us to say that the probability that the state disobeys a condition if it obeys the condition is 0 percent, and vice versa. They are mutually exclusive. The proof of orthogonality is\[

\eq{

\braket{\text{yes-state}}{\text{no-state}} &= \bra{\text{yes-state}} P^\dagger \ket{\text{no-state}} =\\

&= \bra{\text{yes-state}} P \ket{\text{no-state}} = 0,\\

}

\] In the first step, I used the freedom to insert \(P^\dagger\) in between the states because when it acts on the eigenstate bra yes-state, it yields \(1\) times this state because this state is an eigenvalue-one eigenstate (I used the Hermitian conjugate of the usual eigenvalue equation). In the second step, I erased the dagger which is OK because of the hermiticity. In the final step, I acted with \(P\) on the no-state ket vector to get zero – because the no-state is an eigenvalue-zero eigenstate. So I got zero. The opposite-order inner product is also zero because it's the complex conjugate number (or you may prove it by a proof that is mirror to the proof above).

Let me just give you examples of projection operators corresponding to different propositions. For example, the statement "\(x\) of a particle belongs to the interval \((a,b)\)" is represented by the projection operator\[

P_{a\lt x\lt b} = \int_a^b \dd x\,\ket x\bra x.

\] It keeps the position-eigenstate components of any vector \(\ket\psi\) that belong to the interval and erases all others. Now, the proposition that the "electron's spin relative to the \(z\)-axis is equal to \(\hbar/2\) i.e. up" is represented by the projection operator\[

P_{z,\,\rm up} = \frac 12+ \frac{J_z}{\hbar}.

\] It's a simple linear function that moves the values \(J_z=\mp \hbar/2\) to \(0\) and \(1\), respectively. I hope you are able to write down the projection operator for a similar "up" (or "right") statement relative to the \(x\)-axis:\[

P_{x,\,\rm up} = \frac 12+ \frac{J_x}{\hbar}.

\] Now, is the electron's spin "up" relative to the \(z\)-axis? Is it "up" relative to the \(x\)-axis? Those are perfectly meaningful questions that may be answered by a measurement. Because the truth value is either "false" or "true", we may obtain classical bits of information by a measurement.
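The two defining conditions are easy to check numerically for the spin projectors. The following is just a minimal sketch, not part of the original argument: it assumes units with \(\hbar=1\), so that \(J_k=\sigma_k/2\) and \(P=(1+\sigma_k)/2\), and the helper names (`matmul`, `dagger`, `close`, `projector_up`) are made up for this illustration.

```python
# Units with hbar = 1 (an assumption of this sketch); J = sigma / 2 for spin 1/2.

def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    """Hermitian conjugate of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]

def close(a, b, tol=1e-12):
    """Entrywise comparison of two 2x2 matrices."""
    return all(abs(a[i][j] - b[i][j]) < tol for i in range(2) for j in range(2))

def projector_up(sigma):
    """P = 1/2 + J/hbar with J = hbar * sigma / 2, i.e. P = (1 + sigma) / 2."""
    return [[(int(i == j) + sigma[i][j]) / 2 for j in range(2)] for i in range(2)]

SIGMA_X = [[0, 1], [1, 0]]
SIGMA_Z = [[1, 0], [0, -1]]

P_x = projector_up(SIGMA_X)
P_z = projector_up(SIGMA_Z)

for name, P in (("P_x", P_x), ("P_z", P_z)):
    hermitian = close(P, dagger(P))        # P = P^dagger
    idempotent = close(matmul(P, P), P)    # P^2 = P
    print(name, "Hermitian:", hermitian, "idempotent:", idempotent)
```

Both projectors pass both checks, which is all that is needed for their eigenvalues to be \(0\) or \(1\).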

However, my point is that the truth values of "\(z\) up" and "\(x\) up" propositions can't be sharply well-defined at the same moment. Indeed, it's because the commutator of the two projection operators is nonzero:\[

[ P_{z,\,\rm up}, P_{x,\,\rm up}] = \frac{iJ_y}{\hbar} \in\{-\frac i2,+\frac i2\}.

\] The commutator of the two projection operators – that just represent the numbers \(0\) and \(1\) when the corresponding proposition about the spin is false or true, respectively – is equal to a multiple of \(J_y\) and the eigenvalues (i.e. possible values) of this multiple are \(\pm i/2\). Because zero isn't among the eigenvalues of the commutator in this case, the commutator annihilates no nonzero vector:\[

\nexists \ket\psi:\quad (P_z P_x-P_x P_z)\ket \psi = 0.

\] Yes, I started to omit "up" in the subscripts. This non-existence means that it can't possibly happen that \(P_x,P_z\) would simultaneously have some values from the set \(\{0,1\}\). While we may rigorously prove the logical statement that the only possible values of these (and other) projection operators are zero or one, we may also rigorously prove the statement that a physical system can't have a well-defined sharp answer to both questions, "\(z\) up" and "\(x\) up".
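For readers who prefer to see numbers, here is a small sketch of the commutator computation. It is only an illustration under the assumption \(\hbar=1\) (so \(J_y=\sigma_y/2\)); the helper names are hypothetical. It uses the fact that a traceless \(2\times 2\) matrix has eigenvalues \(\pm\sqrt{-\det}\).

```python
import cmath

def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

# Pauli matrices; with hbar = 1 (assumed), J_k = sigma_k / 2.
SIGMA_X = [[0, 1], [1, 0]]
SIGMA_Y = [[0, -1j], [1j, 0]]
SIGMA_Z = [[1, 0], [0, -1]]

def projector_up(sigma):
    """P = 1/2 + J/hbar = (1 + sigma) / 2."""
    return [[(int(i == j) + sigma[i][j]) / 2 for j in range(2)] for i in range(2)]

P_z, P_x = projector_up(SIGMA_Z), projector_up(SIGMA_X)

# Commutator [P_z, P_x] = P_z P_x - P_x P_z.
zx, xz = matmul(P_z, P_x), matmul(P_x, P_z)
commutator = [[zx[i][j] - xz[i][j] for j in range(2)] for i in range(2)]

# Right-hand side of the formula in the text: i * J_y / hbar = i * sigma_y / 2.
expected = [[1j * SIGMA_Y[i][j] / 2 for j in range(2)] for i in range(2)]
matches = all(abs(commutator[i][j] - expected[i][j]) < 1e-12
              for i in range(2) for j in range(2))

# The commutator is traceless, so its eigenvalues are +sqrt(-det) and -sqrt(-det).
det = commutator[0][0] * commutator[1][1] - commutator[0][1] * commutator[1][0]
eigenvalue = cmath.sqrt(-det)

print("commutator equals i*J_y:", matches)
print("eigenvalues: +/-", eigenvalue)
print("determinant is nonzero:", abs(det) > 0)
```

A nonzero determinant means that the only solution of \((P_zP_x-P_xP_z)\ket\psi=0\) is the zero vector, which is the non-existence claim above.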

If we have an appropriate apparatus, we can immediately answer the question whether the spin was "up" relative to a given axis. So the questions associated with the projection operators \(P_x\) and \(P_z\) are totally physical and operationally meaningful. Also, by rotational symmetry, both of them are clearly equally meaningful. Nevertheless, they can't simultaneously have sharp truth values!

These projection operators represent potential truths that don't commute with each other. If you talk about the truth values of \(P_{x}\), your logic is incompatible with the logic of another person who assigns a classical truth value to \(P_z\). It's just not possible for both propositions to be "certainly true". It's not possible for both of them to be "certainly false", either. However, it's also impossible for one of them to be "true" and the other to be "false". ;-) They just can't have classical truth values at the same moment!

Because some people often like to repeat Wheeler's notion that the information is more fundamental in physics – and yes, no, it doesn't really mean much although I sometimes philosophically agree with such a priority – they usually think that such vacuous clichés may protect the classical world for them because the information is surely behaving classically. But it isn't. Quantum mechanics says that the information is counted in quantum bits or qubits (the electron's spin above is mathematically isomorphic to any qubit in quantum mechanics) and the Yes/No answers to most pairs of questions don't commute with one another which means that they can't be simultaneously assigned truth (eigen)values for a given situation.

This was just a trivial introduction. We will use it by realizing that "consistent histories" that would mix "different logics", i.e. statements about \(J_z\) and \(J_x\) at the same moment, are clearly forbidden. We will see formulae that prohibit them, too.

Consistent Histories

Fine. We may finally start to talk about the Consistent Histories interpretation of quantum mechanics. Wikipedia and other sources start by screaming lots of nonsense that it's surely an attempt to debunk the Copenhagen interpretation. Such misconceptions have occurred because virtually all the people talking about "interpretations" are activists and imbeciles who have promoted the fight against quantum mechanics as defined by Bohr and Heisenberg to their life mission. Some of them say such silly things because they don't historically know what Bohr and Heisenberg were actually saying. Most of them are saying such silly things because they refuse to understand basic things about modern physics. Many people belong to both sets.

At any rate, once some of them start to understand what the Consistent Histories interpretation says, they realize that it's not the "weapon of mass destruction" used against the Danish capital that they were dreaming about. In some sense, the Consistent Histories interpretation is a homework exercise:

I should get into some formulae. Readers are recommended to read e.g. this simple and pioneering 1992 text by Gell-Mann and Hartle. They use the Heisenberg picture and it makes the formulae simple. I agree with them it's more natural to use the Heisenberg picture, especially in such discussions (but also in other contexts), but because the people who tend to misunderstand the foundations of quantum mechanics almost universally prefer the Schrödinger picture, I will translate the Consistent Histories wisdom into the picture of the guy who didn't quite respect complementarity or uncertainty (either \(X\) or \(P\)) and who lived both with his wife and with his mistress. :-)

(Well, if you want to hear some defense, he's had children with three mistresses in total and he justified the relations by saying that he "sexually detested his wife Anny".)

It's not so hard to summarize the definition of "weakly consistent" and "medium consistent" histories. What is a history? In the picture we use, the operators are constant in time and the states evolve according to Schrödinger's equation. I will assume that the Hamiltonian is time-independent and the unitary evolution operators from time \(t_1\) through later time \(t_2\) will be denoted \[

U_{t_2,t_1} = \exp(H\frac{t_2-t_1}{i\hbar}).

\] Let's use the value \(t=0\) for the initial state and the time \(t=T\) with some \(T\gt 0\) for the "end of the history". From the beginning through the end, an initial pure state evolves as\[

\ket{\psi}_{\rm initial} \to \ket{\psi}_{\rm final} = U_{T,0} \ket{\psi}_{\rm initial}.

\] Because the density matrix is a combination of ket-bra products\[

\rho = \sum_i p_i \ket{\psi_i}\bra{\psi_i},

\] we may also immediately write down the evolution for the (initial) density matrix:\[

\rho\to U_{T,0}\rho U^\dagger_{T,0}.

\] The daggered evolution operator at the end appeared because of the bra-vectors in the density matrix: they also evolve.

Now, the operator of a history will be the operator \(U_{T,0}\) with some extra, a priori arbitrary, projection operators inserted between the evolution over different intervals into which \((0,T)\) will be divided. We will search for "collections of coarse-grained histories". In the collection, individual elements i.e. histories will be labeled by the Greek letters such as \(\alpha\). Mathematically, the value of \(\alpha\) will store all the information about the moments at which we inserted projection operators as well as the information which projection operators.\[

\alpha\leftrightarrow \{ n_\alpha, \{t_{\alpha,1},t_{\alpha,2},\dots t_{\alpha,n_{\alpha}}\},

\{i_{\alpha,1},i_{\alpha,2},\dots i_{\alpha,n_{\alpha}}\}

\}

\] where \(i_\alpha\) are subscripts distinguishing all possible projection operators \(P_{i_\alpha}\) that we use in any history in the collection. Here, \(n_\alpha\) is the number of projection operators we are inserting in the \(\alpha\)-th alternative history, the labels \(t_j\) specify the value of time \(t\) where we are inserting the projection operators, and \(i_j\) say which projection operators we insert at the \(j\)-th insertion.

You may see that the history operator \(C_\alpha\) will be a generalization of \(U_{T,0}\) of this sort:\[

{\Large

\eq{

C_\alpha &= U_{T,t_{\alpha,n_\alpha}} P_{i_{\alpha,n_\alpha}}\cdot\\

&\cdot U_{t_{\alpha,n_\alpha},t_{\alpha,n_\alpha-1}} P_{i_{\alpha,n_\alpha-1}}\cdot

\\

&\quad \cdots\\

&\cdot U_{t_{\alpha,2},t_{\alpha,1}} P_{i_{\alpha,1}}\cdot\\

&\cdot U_{t_{\alpha,1},0}.

}

}

\] I increased the font size because of the nested subscripts and wrote it on many lines. But the operator is exactly what you expect. You cut \(U_{T,0}\) into \(n_\alpha+1\) evolution operators over intervals and insert the appropriate projection operators to the \(n_\alpha\) places. The insertions and evolution operators at the "earlier times" appear on the right side from their friends linked to "later times"; the usual time ordering holds because the operators on the right are the first ones that act on the initial ket state.

It was a messy formula and I won't write it again. (My formula in Schrödinger's picture differs by the usual transformations by evolution operators, times some possible additional evolution operator, from the Heisenberg-picture formulae in the paper by Gell-Mann and Hartle.)

How can you interpret the history operator? Well, it's like the evolution with \(n_\alpha\) "collapses" in between. However, instead of a discontinuous step in the evolution at which Schrödinger's equation ceases to hold (this totally wrong description occurs at many places, including the newest book by Brian Greene), you should interpret the inserted projection operators differently. They're insertions that are needed to calculate the probability that the history \(\alpha\) will be realized.

This interpretation is needed because the separation into the histories from the particular set is surely not unique, and therefore can't be objective. You may always make the splitting to the histories "less finely grained" and the formalism will calculate the probabilities of these "less finely grained" histories, too. It is clearly up to you – within some limitations – how fine and accurate questions you ask about the evolution which is why you surely can't consider the insertions of the projection operators to be "objective collapses".

Now, how do we calculate the probability that the particular history will take place? It's simple if we assume a pure initial state \(\ket\psi\). What happens with the state? Well, it evolves by the evolution operators \(U_{t_{j+1},t_j}\) over the intervals and at the critical points, the pure state is projected by the projection operators. It is kept non-normalized so we pick the multiplicative factor of the complex probability amplitude associated with the projection operator. We do so for every projection operator in the history so that gives us the product of the complex probability amplitudes associated with all measurements. Finally, we must square the absolute value of this product to get the probability out of the total amplitude.

If you think about the action of \(C_\alpha\) on the initial state as well as the usual Born rule to calculate the probabilities of various measurements (plus the product formula for probabilities of composite statements), you will realize that the probability of the history \(\alpha\) which I will write as \(D(\alpha,\alpha)\) is given by\[

D(\alpha,\alpha) = \bra{\psi} C_\alpha^\dagger\cdot C_\alpha \ket\psi.

\] The first, bra-daggered part of the product, is needed because we calculate the probabilities from the squared absolute values of the complex probability amplitudes that we picked from the projection operators. By the cyclic property of the trace, that can be rewritten as\[

D(\alpha,\alpha) = {\rm Tr}\zav{ C_\alpha \ket\psi

\bra{\psi} C_\alpha^\dagger}.

\] We may easily generalize this formula to a mixed state which is just some combination of \(\ket\psi\bra\psi\) objects. By linearity, we get:\[

D(\alpha,\alpha) = {\rm Tr}\zav{ C_\alpha \rho C_\alpha^\dagger}.

\] Here, \(\rho\) is the initial state at \(t=0\). So the Consistent Histories interpretation allows us to pick a collection of histories and calculate the probability of each history in the collection by the formula above. Finally, I must say what it means for the histories to be "consistent".
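Before getting to consistency, the probability formula can be tried on a toy system. The sketch below is an illustration only, with several assumptions that are not in the text: a single spin 1/2, units with \(\hbar=1\), a hypothetical Hamiltonian \(H=(\omega/2)\sigma_y\) (chosen because its evolution operator is a real rotation matrix), projectors on \(J_z=\pm 1/2\) inserted at two times, and the initial state "\(z\) up". The function names are made up for this example.

```python
import math
from itertools import product

def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    """Hermitian conjugate of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]

def trace(a):
    return a[0][0] + a[1][1]

def evolution(omega, t2, t1):
    """U_{t2,t1} = exp(-i H (t2 - t1)) for the assumed H = (omega/2) sigma_y, hbar = 1."""
    half = omega * (t2 - t1) / 2
    c, s = math.cos(half), math.sin(half)
    return [[c, -s], [s, c]]

# Projectors on J_z = +1/2 ("up") and J_z = -1/2 ("down").
P = {"up": [[1, 0], [0, 0]], "down": [[0, 0], [0, 1]]}

def history_operator(omega, T, times, labels):
    """C_alpha: evolve from 0 to T, inserting P[labels[j]] at times[j]."""
    C = [[1, 0], [0, 1]]
    t_prev = 0.0
    for t, label in zip(times, labels):
        C = matmul(evolution(omega, t, t_prev), C)  # evolve up to the insertion time
        C = matmul(P[label], C)                     # insert the projector
        t_prev = t
    return matmul(evolution(omega, T, t_prev), C)   # final stretch up to t = T

def probability(C, rho):
    """D(alpha, alpha) = Tr(C rho C^dagger)."""
    return trace(matmul(matmul(C, rho), dagger(C))).real

rho = P["up"]                        # initial state: spin up along z
omega, T = 1.0, 1.5 * math.pi
times = [0.5 * math.pi, math.pi]     # two insertion times

probs = {}
for labels in product(("up", "down"), repeat=2):
    C = history_operator(omega, T, times, labels)
    probs[labels] = probability(C, rho)
    print(labels, round(probs[labels], 6))
print("sum of diagonal entries:", round(sum(probs.values()), 6))
```

With these particular times each interval rotates the spin by a quarter turn, so every one of the four histories gets \(D(\alpha,\alpha)=1/4\). Whether these numbers may be interpreted as probabilities is exactly what the consistency condition decides.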

Well, if we "merge" two nearby (or any two) histories \(\alpha\) and \(\beta\), we get a less fine history called "\(\alpha\) or \(\beta\)". I have assumed that all the histories in the set are mutually exclusive and the total probability is guaranteed to be one. The probability of "\(\alpha\) or \(\beta\)" must be equal to the sum of probabilities, \(D(\alpha,\alpha)+D(\beta,\beta)\), but even this "\(\alpha\) or \(\beta\)" thing is a history so its probability must be given by the same formula for \(D\), one involving the history operator\[

C_{\alpha\text{ or }\beta} = C_\alpha + C_\beta.

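\] Because \(D(\gamma,\gamma)\) is bilinear in \(C_\gamma\) and/or its Hermitian conjugate, the addition formula needs the mixed \(\alpha\)-\(\beta\) terms to cancel. These mixed terms are the off-diagonal entries \(D(\alpha,\beta)={\rm Tr}\zav{C_\alpha\rho C_\beta^\dagger}\) and \(D(\beta,\alpha)=D(\alpha,\beta)^*\) of the same functional. The additivity of the probabilities therefore requires\[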

{\rm Re}\,D(\alpha,\beta) = 0.

\] The imaginary part doesn't have to be zero because it cancels against its complex conjugate term. The condition above, required for all pairs \(\forall \alpha,\beta\) in the collection of histories, is known as "weak consistency" (originally "weak decoherence") condition.

Now, it's very unnatural to require that just the real part of the off-diagonal entries \(D(\alpha,\beta)\) for the histories' probability vanishes. The reason is that the phase of \(C_\alpha\) is really a matter of conventions and in realistic situations, the phases of \(C_\alpha\) and \(C_\beta\) may even change independently, almost immediately. So instead of the "weak consistency" condition, it is more sensible to demand the "medium consistency" condition\[

\forall \alpha\neq \beta:\quad D(\alpha,\beta) = 0.

\] The matrix of probabilities for the histories, \(D(\alpha,\beta)\), must simply be diagonal and the diagonal entries calculate the probability of each history for us. It's that simple.
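Here is a hedged numerical sketch of what the condition excludes. The setup is an assumption made for illustration, not something taken from the text: a spin 1/2 with \(\hbar=1\), an evolution operator that is a real rotation by a quarter turn over each of three equal intervals, projectors on \(J_z=\pm 1/2\) inserted at the two intermediate times, and the initial state "\(z\) up". The off-diagonal entries use \(D(\alpha,\beta)={\rm Tr}(C_\alpha\rho C_\beta^\dagger)\); all names are hypothetical.

```python
import math
from itertools import product

def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    """Hermitian conjugate of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]

def trace(a):
    return a[0][0] + a[1][1]

# Assumed evolution over each interval: exp(-i (pi/4) sigma_y), a real rotation.
c, s = math.cos(math.pi / 4), math.sin(math.pi / 4)
U = [[c, -s], [s, c]]

P = {"up": [[1, 0], [0, 0]], "down": [[0, 0], [0, 1]]}
rho = P["up"]  # initial state: spin up along z

def C(first, second):
    """History operator U P_second U P_first U (earlier operators on the right)."""
    out = U
    for proj in (P[first], P[second]):
        out = matmul(U, matmul(proj, out))
    return out

def D(alpha, beta):
    """Decoherence functional D(alpha, beta) = Tr(C_alpha rho C_beta^dagger)."""
    return trace(matmul(matmul(C(*alpha), rho), dagger(C(*beta))))

histories = list(product(("up", "down"), repeat=2))
pairs = [(a, b) for a in histories for b in histories if a != b]
weak = all(abs(D(a, b).real) < 1e-12 for a, b in pairs)
medium = all(abs(D(a, b)) < 1e-12 for a, b in pairs)
print("weakly consistent:", weak, " medium consistent:", medium)

# Merge two histories that differ only at the first insertion time.
alpha, beta = ("up", "up"), ("down", "up")
C_sum = [[C(*alpha)[i][j] + C(*beta)[i][j] for j in range(2)] for i in range(2)]
p_merged = trace(matmul(matmul(C_sum, rho), dagger(C_sum))).real
p_added = D(alpha, alpha).real + D(beta, beta).real
print("Re D(alpha, beta):", round(D(alpha, beta).real, 6))
print("merged:", round(p_merged, 6), " sum of the two:", round(p_added, 6))
```

In this toy collection the two histories have \({\rm Re}\,D(\alpha,\beta)=-1/4\), so the merged history has probability \(0\) while the naive sum is \(1/2\). The collection fails even weak consistency and its diagonal entries can't be used as probabilities.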

Any collection of alternative histories satisfying the medium consistency condition may be "asked" and quantum mechanics gives us the "answers" while all the identities for the probabilities of composite propositions such as "\(\alpha\) or \(\beta\)" will hold as expected. So one will be able to use "classical reasoning" or "common sense" for the answers to all these questions.

It's important to realize that the job for quantum mechanics isn't to "calculate the right questions" or the "right collection of alternative histories" for us. There is no canonical choice. To say the least, there's clearly no preferred "degree of fine or coarse graining" we should adopt. Too coarse graining will be telling us too little; too fine graining will lead us to a conflict with the consistency condition – this conflict really has its origin in the uncertainty principle. You simply can't expect too many things to be specified too sharply. If you tried to fine-grain the histories "absolutely finely", the histories would resemble the classical histories summed in Feynman's approach to quantum mechanics. But they're clearly not consistent. In particular, we know that they can't be mutually exclusive because even in the classical limit, many histories in the vicinity of the classical solution contribute to the evolution, as Feynman taught us. This fact also manifests itself by nonzero off-diagonal entries of \(D\) between histories that are too close to each other (e.g. because the projection operators on states or "cells in the phase space" are clearly not mutually exclusive if the two cells overlap).

The right attitude is somewhere in between – collections of coarse-grained histories for which the consistency condition holds accurately enough, i.e. histories that obey the uncertainty principle etc. sufficiently satisfactorily, but also histories that are fine enough for us to be satisfied with the precision we need. The precise location of the "compromise" clearly cannot be objectively codified. To choose how accurately we want to distinguish histories is clearly a subjective choice. It's up to the observer.

It should be obvious to the reader that there can't exist any "only right degree of coarse-graining". So there can't exist any "only right set of consistent histories". The choice of the right questions, alternative answers, and the degree of accuracy is up to the observer who chooses the logic. It is inevitably subjective and non-unique. The projection operators don't represent any "objective collapse". Instead, the way they're inserted encodes the question that an observer asked – and I have written down the explicit formula for the answer, namely the probability of a given history, too.

All physically meaningful questions may be summarized as the questions about the probabilities of different alternative histories in a consistent collection, given a known initial state encoded in a density matrix. If you find several collections of consistent histories, good for you. You may perhaps succeed even if there won't be any "unifying finely grained collection" that would allow you to fully answer all the questions from the two collections. The collections may perhaps look at the physical system from a totally different angle. But if they're consistent, they're allowed.

This is clearly a complete and consistent interpretation of quantum mechanics. It tells you exactly what you may ask and what you're not allowed to ask, and for the things you may ask, it tells you how to calculate the answers. They agree with the experiments. All the criticism of this interpretation is clearly pure idiocy and bigotry.

Let me just mention two representative examples of histories that are not consistent.

Start with Schrödinger's cat described by the density matrix \(\rho\). Let the killing device evolve. At the end, try to define two histories that project the cat to some random macroscopic superpositions of the "common sense" dead and alive states such as\[

0.6\ket{\rm dead}+0.8i\ket{\rm alive},\quad 0.8i\ket{\rm dead}+0.6\ket{\rm alive}.

\] The functional \(D(1,2)\) will be nonzero because the matrix of probability – the final density matrix after decoherence – has nonzero off-diagonal entries in this "uncommon sense" basis.

In principle, you could think that if the probability of "dead" and "alive" will be exactly equal, the matrix \(D\) will be a multiple of the identity matrix – and the identity matrix has the same form in all bases, including bases of unnatural superpositions. In principle, it's right and you have the freedom to rotate the bases arbitrarily.

In practice, you can't rotate them because the evolution of the cat will be producing and affecting lots of environmental degrees of freedom. If you choose a slightly more fine-grained history for the "dead portion" of the evolution than for the "alive portion", or vice versa, the relevant part of \(D(\alpha,\beta)\) will cease to be a multiple of the identity matrix: the entries on the diagonal of \(D\) will be divided to smaller pieces in the "dead cat branch" of the matrix. Because you want your calculation to be independent of the precise level of coarse-graining or the number of degrees of freedom that you treat as the environment, even in the special case when some of the diagonal entries of \(D\) are exactly equal, you won't really be allowed to rotate the basis while preserving the consistency condition "robustly".

Conventional low-energy situations won't really allow you "qualitatively different choices of the collection of consistent histories" that wouldn't be just some "coarse-graining of quantum possibilities around some classical histories". However, the black hole complementarity actually represents a great example of non-uniqueness of the solution to the condition of consistency of histories. The infalling and outgoing observer are using qualitatively different consistent collections of history operators acting on the same (or overlapping) Hilbert space.

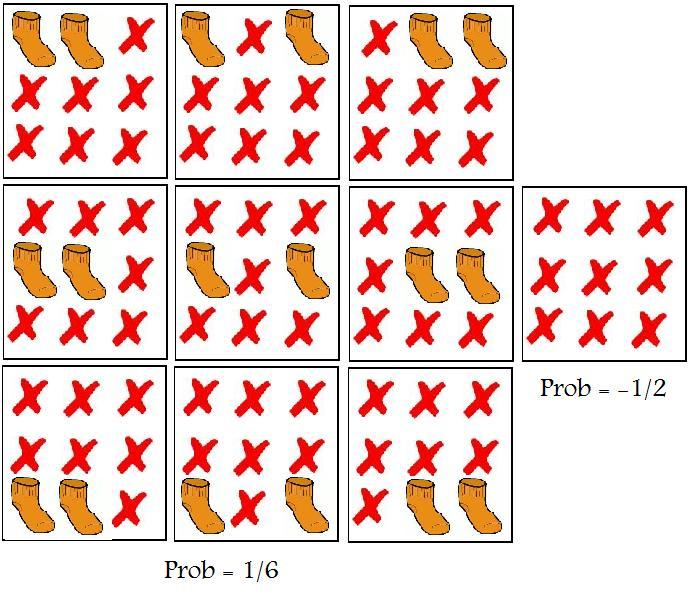

Finally, let me also mention that the consistency condition may seemingly allow you to choose "\(z\) up" and "\(x\) up" histories from the beginning of the article in the same collection. The Consistent Histories formalism simplifies dramatically if we only want to show this simple point. The evolution may be completely dropped, the history operators reduce to simple projection operators, and we essentially consider\[

D(x,z) = {\rm Tr} \zav{P_x \rho P_z}.

\] If you write \(\rho\) as a combination of the identity matrix and three Pauli matrices (or, equivalently, multiples of \(P_x,P_y,P_z\)), \(\rho=(1+\vec a\cdot\vec\sigma)/2\), you will find out that the trace above equals \((1+a_x+a_z+ia_y)/4\), so its imaginary part vanishes as long as \(\rho\) contains no contribution from \(P_y\). So if the expectation value of \(J_y\) in the initial state vanishes, \(D(x,z)\) is real, and it vanishes completely for states with \(a_x+a_z=-1\), e.g. for the "\(x\) down" or "\(z\) down" state. (The claim about the imaginary part may also be easily seen by calculating \(D(x,z)-D(z,x)\) from the commutator \([P_z,P_x]\).)

However, such a collection of histories will fail to obey the logical condition I haven't mentioned yet:\[

\sum_\alpha C_\alpha = {\bf 1}.

\] This should be valid as an operator equation so it's stronger than \(\sum_\alpha D(\alpha,\alpha)=1\). So it's not allowed to consider "alternatives" that aren't really orthogonal to each other.
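Both statements, the commutator identity for \(D(x,z)-D(z,x)\) and the failure of the completeness condition, can be checked in a few lines. This is only a sketch under stated assumptions: \(\hbar=1\), a density matrix \(\rho=(1+\vec a\cdot\vec\sigma)/2\) with an arbitrarily chosen, hypothetical Bloch vector \(\vec a=(0.3,0.4,0.5)\), and made-up helper names.

```python
def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def trace(a):
    return a[0][0] + a[1][1]

# Hypothetical Bloch vector with |a| < 1; rho = (1 + a . sigma) / 2.
a_x, a_y, a_z = 0.3, 0.4, 0.5
rho = [[(1 + a_z) / 2, (a_x - 1j * a_y) / 2],
       [(a_x + 1j * a_y) / 2, (1 - a_z) / 2]]

P_x = [[0.5, 0.5], [0.5, 0.5]]   # (1 + sigma_x) / 2
P_z = [[1, 0], [0, 0]]           # (1 + sigma_z) / 2

def D(P_left, P_right):
    """Tr(P_left rho P_right); projectors are Hermitian, so no dagger is needed."""
    return trace(matmul(matmul(P_left, rho), P_right))

# D(x,z) - D(z,x) = Tr([P_z, P_x] rho) = i <sigma_y> / 2.
difference = D(P_x, P_z) - D(P_z, P_x)
print("D(x,z) - D(z,x):", difference, " i*a_y/2:", 0.5j * a_y)

# Completeness: do the two "alternatives" add up to the identity operator?
total = [[P_x[i][j] + P_z[i][j] for j in range(2)] for i in range(2)]
sums_to_identity = total == [[1, 0], [0, 1]]
print("P_x + P_z =", total, " equals identity:", sums_to_identity)
```

The sum \(P_x+P_z\) is \(1+(\sigma_x+\sigma_z)/2\) rather than the identity, which is the operator statement that "\(x\) up" and "\(z\) up" are not a complete set of mutually exclusive alternatives.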

In practice, the equation \(D(\alpha,\beta)=0\) is never "quite accurate" so we always ask questions about alternative histories that are only approximately consistent although the accuracy quickly becomes sufficient for all practical and most impractical purposes. That's a manifestation of the fact that classical physics – and classical reasoning in general – never kicks in quite exactly.

Let me mention that aside from the "weak decoherence" and "medium decoherence" conditions above (the medium one clearly implies the weak one), Gell-Mann and Hartle also discussed a "strong decoherence" condition which would imply both of the previous two but which is too strong and would kill almost all choices of "history collections" whenever the initial state is highly mixed. The condition said that one could express all products \(C_\alpha \rho\) as\[

C_\alpha \rho = R_\alpha \rho

\] where \(R_\alpha\) is a projection operator, a "record projection". So one wants to work with the "medium decoherent" histories.


At any rate, once some of them start to understand what the Consistent Histories interpretation says, they realize that it's not the "weapon of mass destruction" used against the Danish capital that they were dreaming about. In some sense, the Consistent Histories interpretation is a homework exercise:

Apply the Copenhagen interpretation to a collection of arbitrary sequences of measurements at various times and discuss which collections are permissible as interpretations of alternative histories. With the help of decoherence, show that your formulae clarify all issues surrounding the so-called "measurement problem" i.e. that quantum mechanics in its Copenhagen interpretation is a complete theory that produces meaningful predictions for microscopic as well as macroscopic systems.

Of course, they feel utterly disappointed. The Consistent Histories approach is refusing to offer them the "classical mechanisms" and "classical information about the system" and "preferred, 'real' choices of bases and operators" and all other things they were expecting. Instead, the fathers of the Consistent Histories join Bohr and Heisenberg in announcing that quantum physics is different than any theory within the general classical framework. It doesn't assume any objective information about the reality. The probabilities are intrinsically incorporated to the foundations of the theory, just like they have always been. But there is no engine or mechanism that "produces" the probabilities in a way that could be fully described by a classical model. No surprise, the Consistent Histories interpretation was coined by mature physicists such as Murray Gell-Mann, James Hartle, Roland Omnès and Robert B. Griffiths.

I should get into some formulae. Readers are recommended to read e.g. this simple and pioneering 1992 text by Gell-Mann and Hartle. They use the Heisenberg picture and it makes the formulae simple. I agree with them it's more natural to use the Heisenberg picture, especially in such discussions (but also in other contexts), but because the people who tend to misunderstand the foundations of quantum mechanics almost universally prefer the Schrödinger picture, I will translate the Consistent Histories wisdom into the picture of the guy who didn't quite respect complementarity or uncertainty (either \(X\) or \(P\)) and who lived both with his wife and with his mistress. :-)

(Well, if you want to hear some defense, he's had children with three mistresses in total and he justified the relations by saying that he "sexually detested his wife Anny".)

It's not so hard to summarize the definition of "weakly consistent" and "medium consistent" histories. What is a history? In the picture we use, the operators are constant in time and the states evolve according to Schrödinger's equation. I will assume that the Hamiltonian is time-independent and the unitary evolution operators from time \(t_1\) through later time \(t_2\) will be denoted \[

U_{t_2,t_1} = \exp(H\frac{t_2-t_1}{i\hbar}).

\] Let's use the value \(t=0\) for the initial state and the time \(t=T\) with some \(T\gt 0\) for the "end of the history". From the beginning through the end, an initial pure state evolves as\[

\ket{\psi}_{\rm initial} \to \ket{\psi}_{\rm final} = U_{T,0} \ket{\psi}_{\rm initial}.

\] Because the density matrix is a combination of ket-bra products\[

\rho = \sum_i p_i \ket{\psi_i}\bra{\psi_i},

\] we may also immediately write down the evolution for the (initial) density matrix:\[

\rho\to U_{T,0}\rho U^\dagger_{T,0}.

\] The daggered evolution operator at the end appeared because of the bra-vectors in the density matrix: they also evolve.

Now, the operator of a history will be the operator \(U_{T,0}\) with some extra, a priori arbitrary, projection operators inserted between the evolution over different intervals into which \((0,T)\) will be divided. We will search for "collections of coarse-grained histories". In the collection, individual elements i.e. histories will be labeled by the Greek letters such as \(\alpha\). Mathematically, the value of \(\alpha\) will store all the information about the moments at which we inserted projection operators as well as the information which projection operators.\[

\alpha\leftrightarrow \{ n_\alpha, \{t_{\alpha,1},t_{\alpha,2},\dots, t_{\alpha,n_{\alpha}}\}, \{i_{\alpha,1},i_{\alpha,2},\dots, i_{\alpha,n_{\alpha}}\} \}

\] where \(i_\alpha\) are subscripts distinguishing all possible projection operators \(P_{i_\alpha}\) that we use in any history in the collection. Here, \(n_\alpha\) is the number of projection operators we are inserting in the \(\alpha\)-th alternative history, the labels \(t_j\) specify the value of time \(t\) where we are inserting the projection operators, and \(i_j\) say which projection operators we insert at the \(j\)-th insertion.

You may see that the history operator \(C_\alpha\) will be a generalization of \(U_{T,0}\) of this sort:\[

{\Large

\eq{

C_\alpha &= U_{T,t_{\alpha,n_\alpha}} P_{i_{\alpha,n_\alpha}}\cdot\\

&\cdot U_{t_{\alpha,n_\alpha},t_{\alpha,n_\alpha-1}} P_{i_{\alpha,n_\alpha-1}}\cdot

\\

&\quad \cdots\\

&\cdot U_{t_{\alpha,2},t_{\alpha,1}} P_{i_{\alpha,1}}\cdot\\

&\cdot U_{t_{\alpha,1},0}.

}

}

\] I increased the font size because of the nested subscripts and wrote it on many lines. But the operator is exactly what you expect. You cut \(U_{T,0}\) into \(n_\alpha+1\) evolution operators over intervals and insert the appropriate projection operators to the \(n_\alpha\) places. The insertions and evolution operators at the "earlier times" appear on the right side from their friends linked to "later times"; the usual time ordering holds because the operators on the right are the first ones that act on the initial ket state.

It was a messy formula and I won't write it again. (My formula in Schrödinger's picture differs by the usual transformations by evolution operators, times some possible additional evolution operator, from the Heisenberg-picture formulae in the paper by Gell-Mann and Hartle.)

How can you interpret the history operator? Well, it's like the evolution with \(n_\alpha\) "collapses" in between. However, instead of a discontinuous step in the evolution at which Schrödinger's equation ceases to hold (this totally wrong description occurs at many places, including the newest book by Brian Greene), you should interpret the inserted projection operators differently. They're insertions that are needed to calculate the probability that the history \(\alpha\) will be realized.

This interpretation is needed because the separation into the histories from the particular set is surely not unique, and therefore can't be objective. You may always make the splitting to the histories "less finely grained" and the formalism will calculate the probabilities of these "less finely grained" histories, too. It is clearly up to you – within some limitations – how fine and accurate questions you ask about the evolution which is why you surely can't consider the insertions of the projection operators to be "objective collapses".

Now, how do we calculate the probability that the particular history will take place? It's simple if we assume a pure initial state \(\ket\psi\). What happens with the state? Well, it evolves by the evolution operators \(U_{t_{j+1},t_j}\) over the intervals and at the critical points, the pure state is projected by the projection operators. It is kept non-normalized so we pick the multiplicative factor of the complex probability amplitude associated with the projection operator. We do so for every projection operator in the history so that gives us the product of the complex probability amplitudes associated with all measurements. Finally, we must square the absolute value of this product to get the probability out of the total amplitude.

If you think about the action of \(C_\alpha\) on the initial state as well as the usual Born rule to calculate the probabilities of various measurements (plus the product formula for probabilities of composite statements), you will realize that the probability of the history \(\alpha\) which I will write as \(D(\alpha,\alpha)\) is given by\[

D(\alpha,\alpha) = \bra{\psi} C_\alpha^\dagger\cdot C_\alpha \ket\psi.

\] The first, bra-daggered part of the product, is needed because we calculate the probabilities from the squared absolute values of the complex probability amplitudes that we picked from the projection operators. By the cyclic property of the trace, that can be rewritten as\[

D(\alpha,\alpha) = {\rm Tr}\zav{ C_\alpha \ket\psi

\bra{\psi} C_\alpha^\dagger}.

\] We may easily generalize this formula to a mixed state which is just some combination of \(\ket\psi\bra\psi\) objects. By linearity, we get:\[

D(\alpha,\alpha) = {\rm Tr}\zav{ C_\alpha \rho C_\alpha^\dagger}.

\] Here, \(\rho\) is the initial state at \(t=0\). So the Consistent Histories interpretation allows us to pick a collection of histories and calculate the probability of each history in the collection by the formula above. Finally, I must say what it means for the histories to be "consistent".
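To make the recipe concrete, here is a minimal sketch in plain Python for a single spin-1/2. Everything specific in it is an assumption chosen for illustration: units with \(\hbar=1\), a Hamiltonian \(H=\omega J_y\) (so that each evolution operator is a real rotation), two insertions of the projectors on \(J_z=\pm 1/2\), and the helper names `evolve`, `history_operator` and `probability`.

```python
import math

def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    """Hermitian conjugate of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]

def trace(a):
    return a[0][0] + a[1][1]

def evolve(theta):
    """U = exp(-i*theta*J_y) for H = omega*J_y, with theta = omega*(t2 - t1)."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]

# Projectors on J_z = +1/2 ("up") and J_z = -1/2 ("down").
P = {"up": [[1, 0], [0, 0]], "down": [[0, 0], [0, 1]]}

def history_operator(labels, thetas):
    """C_alpha = U_{T,t_n} P_{i_n} ... U_{t_2,t_1} P_{i_1} U_{t_1,0}.

    thetas holds one rotation angle per interval, so len(thetas) == len(labels) + 1.
    """
    c = evolve(thetas[0])
    for label, theta in zip(labels, thetas[1:]):
        c = matmul(evolve(theta), matmul(P[label], c))
    return c

def probability(c, rho):
    """D(alpha, alpha) = Tr(C_alpha rho C_alpha^dagger)."""
    return trace(matmul(c, matmul(rho, dagger(c)))).real

rho = [[1, 0], [0, 0]]                     # initial state: spin up along z
thetas = [math.pi / 2, math.pi / 3, 0.0]   # omega * (length of each interval)

probs = {}
for first in P:
    for second in P:
        c = history_operator([first, second], thetas)
        probs[(first, second)] = probability(c, rho)

for labels, p in probs.items():
    print(labels, round(p, 6))
print("total:", round(sum(probs.values()), 6))
```

With these angles the four diagonal entries come out as 0.375, 0.125, 0.125 and 0.375, and they add up to one because a complete set of projectors is inserted at each of the two times.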

Well, if we "merge" two nearby (or any two) histories \(\alpha\) and \(\beta\), we get a less fine history called "\(\alpha\) or \(\beta\)". I have assumed that all the histories in the set are mutually exclusive and the total probability is guaranteed to be one. The probability of "\(\alpha\) or \(\beta\)" must be equal to the sum of probabilities, \(D(\alpha,\alpha)+D(\beta,\beta)\), but even this "\(\alpha\) or \(\beta\)" thing is a history so its probability must be given by the same formula for \(D\), one involving the history operator\[

C_{\alpha\text{ or }\beta} = C_\alpha + C_\beta.

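\] Because \(D(\gamma,\gamma)\) is bilinear in \(C_\gamma\) and its Hermitian conjugate, the probability of "\(\alpha\) or \(\beta\)" contains, besides \(D(\alpha,\alpha)+D(\beta,\beta)\), the mixed terms \(D(\alpha,\beta)+D(\beta,\alpha)\) where \(D(\alpha,\beta)={\rm Tr}\zav{C_\alpha \rho C_\beta^\dagger}\) and \(D(\beta,\alpha)=D(\alpha,\beta)^*\). The addition formula needs these mixed terms to cancel. The additivity of the probabilities therefore requires\[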

{\rm Re}\,D(\alpha,\beta) = 0.

\] The imaginary part doesn't have to be zero because it cancels against its complex conjugate term. The condition above, required for all pairs \(\alpha\neq\beta\) in the collection of histories, is known as the "weak consistency" (originally "weak decoherence") condition.

Now, it's very unnatural to require that just the real part of the off-diagonal entries \(D(\alpha,\beta)\) for the histories' probability vanishes. The reason is that the phase of \(C_\alpha\) is really a matter of conventions and in realistic situations, the phases of \(C_\alpha\) and \(C_\beta\) may even change independently, almost immediately. So instead of the "weak consistency" condition, it is more sensible to demand the "medium consistency" condition\[

\forall \alpha\neq \beta:\quad D(\alpha,\beta) = 0.

\] The matrix of probabilities for the histories, \(D(\alpha,\beta)\), must simply be diagonal and the diagonal entries calculate the probability of each history for us. It's that simple.
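The diagonality test can be tried on a toy model. The sketch below is a hedged illustration in plain Python, not a general implementation: it assumes a single spin-1/2 with \(\hbar=1\), a Hamiltonian \(H=\omega J_y\), projectors on \(J_z=\pm 1/2\) inserted at two times, and the off-diagonal definition \(D(\alpha,\beta)={\rm Tr}(C_\alpha\rho C_\beta^\dagger)\). All function names are made up for this example.

```python
import math
from itertools import product

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]

def trace(a):
    return a[0][0] + a[1][1]

def evolve(theta):
    """U = exp(-i*theta*J_y), a real rotation, with theta = omega*(t2 - t1)."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]

P = {"up": [[1, 0], [0, 0]], "down": [[0, 0], [0, 1]]}  # projectors on J_z = +-1/2

def history_operator(labels, thetas):
    """Evolution operators with one projector inserted between successive intervals."""
    c = evolve(thetas[0])
    for label, theta in zip(labels, thetas[1:]):
        c = matmul(evolve(theta), matmul(P[label], c))
    return c

def decoherence_functional(histories, thetas, rho):
    """Matrix of D(alpha, beta) = Tr(C_alpha rho C_beta^dagger)."""
    ops = [history_operator(h, thetas) for h in histories]
    return [[trace(matmul(ca, matmul(rho, dagger(cb)))) for cb in ops]
            for ca in ops]

def is_medium_consistent(d, tol=1e-12):
    """True if every off-diagonal entry of D vanishes within the tolerance."""
    n = len(d)
    return all(abs(d[a][b]) < tol for a in range(n) for b in range(n) if a != b)

rho = [[1, 0], [0, 0]]                    # initial state: spin up along z
histories = list(product(P, repeat=2))    # (up,up), (up,down), (down,up), (down,down)

# The spin rotates between the two insertions: the collection is not consistent.
d_rotating = decoherence_functional(histories, [math.pi / 2, math.pi / 3, 0.0], rho)
print(d_rotating[0][2], is_medium_consistent(d_rotating))

# No evolution between the two insertions: the off-diagonal entries vanish.
d_static = decoherence_functional(histories, [math.pi / 2, 0.0, 0.0], rho)
print(is_medium_consistent(d_static))
```

In the first case the entry between (up, up) and (down, up) equals \(-\frac 14\sin\theta_1\sin\theta_2\) in terms of the two rotation angles, so the same projectors may or may not form a consistent collection depending on the evolution between them.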

Any collection of alternative histories satisfying the medium consistency condition may be "asked" and quantum mechanics gives us the "answers" while all the identities for the probabilities of composite propositions such as "\(\alpha\) or \(\beta\)" will hold as expected. So one will be able to use "classical reasoning" or "common sense" for the answers to all these questions.

It's important to realize that the job for quantum mechanics isn't to "calculate the right questions" or the "right collection of alternative histories" for us. There is no canonical choice. To say the least, there's clearly no preferred "degree of fine or coarse graining" we should adopt. Too coarse graining will be telling us too little; too fine graining will lead us to a conflict with the consistency condition – this conflict really has the origin in the uncertainty principle. You simply can't expect too many things to be specified too sharply. If you tried to fine-grain the histories "absolutely finely", the histories would resemble the classical histories summed in Feynman's approach to quantum mechanics. But they're clearly not consistent. In particular, we know that they can't be mutually exclusive because even in the classical limit, many histories in the vicinity of the classical solution contribute to the evolution, as Feynman taught us. This fact also manifests itself by nonzero off-diagonal entries of \(D(\alpha,\beta)\) between histories that are too close to each other (e.g. because the projection operators on states or "cells in the phase space" are clearly not mutually exclusive if the two cells overlap).

The right attitude is somewhere in between – collections of coarse-grained histories for which the consistency condition holds accurately enough, i.e. histories that obey the uncertainty principle etc. sufficiently satisfactorily, but also histories that are fine enough for us to be satisfied with the precision we need. The precise location of the "compromise" clearly cannot be objectively codified. To choose how accurately we want to distinguish histories is clearly a subjective choice. It's up to the observer.

It should be obvious to the reader that there can't exist any "only right degree of coarse-graining". So there can't exist any "only right set of consistent histories". The choice of the right questions, alternative answers, and the degree of accuracy is up to the observer who chooses the logic. It is inevitably subjective and non-unique. The projection operators don't represent any "objective collapse". Instead, the way how they're inserted encodes the question that an observer asked – and I have written down the explicit formula for the answer, namely the probability of a given history, too.

All physically meaningful questions may be summarized as the questions about the probabilities of different alternative histories in a consistent collection, given a known initial state encoded in a density matrix. If you find several collections of consistent histories, good for you. You may perhaps succeed even if there won't be any "unifying finely grained collection" that would allow you to fully answer all the questions from the two collections. The collections may perhaps look at the physical system from a totally different angle. But if they're consistent, they're allowed.

This is clearly a complete and consistent interpretation of quantum mechanics. It tells you exactly what you may ask and what you're not allowed to ask, and for the things you may ask, it tells you how to calculate the answers. They agree with the experiments. All the criticism of this interpretation is clearly pure idiocy and bigotry.

Let me just mention two representative examples of histories that are not consistent.

Start with Schrödinger's cat described by the density matrix \(\rho\). Let the killing device evolve. At the end, try to define two histories that project the cat to some random macroscopic superpositions of the "common sense" dead and alive states such as\[

0.6\ket{\rm dead}+0.8i\ket{\rm alive},\quad 0.8i\ket{\rm dead}+0.6\ket{\rm alive}.

\] The functional \(D(1,2)\) will be nonzero because the matrix of probability – the final density matrix after decoherence – is off-diagonal in this "uncommon sense" basis.

In principle, you could think that if the probability of "dead" and "alive" will be exactly equal, the matrix \(D\) will be a multiple of the identity matrix – and the identity matrix has the same form in all bases, including bases of unnatural superpositions. In principle, it's right and you have the freedom to rotate the bases arbitrarily.

In practice, you can't rotate them because the evolution of the cat will be producing and affecting lots of environmental degrees of freedom. If you choose a slightly more fine-grained history for the "dead portion" of the evolution than for the "alive portion", or vice versa, the relevant part of \(D(\alpha,\beta)\) will cease to be a multiple of the identity matrix: the entries on the diagonal of \(D\) will be divided to smaller pieces in the "dead cat branch" of the matrix. Because you want your calculation to be independent of the precise level of coarse-graining or the number of degrees of freedom that you treat as the environment, even in the special case when some of the diagonal entries of \(D\) are exactly equal, you won't really be allowed to rotate the basis while preserving the consistency condition "robustly".

Conventional low-energy situations won't really allow you "qualitatively different choices of the collection of consistent histories" that wouldn't be just some "coarse-graining of quantum possibilities around some classical histories". However, the black hole complementarity actually represents a great example of non-uniqueness of the solution to the condition of consistency of histories. The infalling and outgoing observer are using qualitatively different consistent collections of history operators acting on the same (or overlapping) Hilbert space.

Finally, let me also mention that the consistency condition may seemingly allow you to choose "\(z\) up" and "\(x\) up" histories from the beginning of the article in the same collection. The Consistent Histories formalism simplifies dramatically if we only want to show this simple point. The evolution may be completely dropped, the history operators reduce to simple projection operators, and we essentially consider\[

D(x,z) = {\rm Tr} \zav{P_x \rho P_z}.

\] If you write \(\rho\) as a combination of the identity matrix and three Pauli matrices (or, equivalently, multiples of \(P_x,P_y,P_z\)), \(\rho=(1+\vec a\cdot\vec\sigma)/2\), you will find out that the trace above equals \((1+a_x+a_z+ia_y)/4\). Its imaginary part vanishes as long as \(\rho\) contains no contribution from \(\sigma_y\), i.e. if the expectation value of \(J_y\) in the initial state vanishes, and its real part vanishes if \(a_x+a_z=-1\). So for suitable initial states, the off-diagonal elements will be zero. (The claim about the imaginary part may also be easily seen by calculating \(D(x,z)-D(z,x)\) from the commutator \([P_z,P_x]\).)

However, such a collection of histories will fail to obey the logical condition I haven't mentioned yet:\[

\sum_\alpha C_\alpha = {\bf 1}.

\] This should be valid as an operator equation so it's stronger than \(\sum_\alpha D(\alpha,\alpha)=1\). So it's not allowed to consider "alternatives" that aren't really orthogonal to each other.
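Both statements about the "\(x\) up" and "\(z\) up" pair can be checked with a few lines of plain Python. This is only a sketch under stated assumptions: the density matrix is parametrized by a Bloch vector \((a_x,a_y,a_z)\) as \(\rho=(1+\vec a\cdot\vec\sigma)/2\), for which the trace works out to \((1+a_x+a_z+ia_y)/4\), and the helper names `rho_from_bloch` and `d_xz` are invented for the example.

```python
def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def trace(a):
    return a[0][0] + a[1][1]

Px = [[0.5, 0.5], [0.5, 0.5]]   # projector on "x up"
Pz = [[1, 0], [0, 0]]           # projector on "z up"

def rho_from_bloch(ax, ay, az):
    """rho = (1 + a . sigma) / 2 for a Bloch vector with |a| <= 1."""
    return [[(1 + az) / 2, (ax - 1j * ay) / 2],
            [(ax + 1j * ay) / 2, (1 - az) / 2]]

def d_xz(rho):
    """D(x, z) = Tr(P_x rho P_z)."""
    return trace(matmul(Px, matmul(rho, Pz)))

for bloch in [(0, 0, 1), (0, 1, 0), (-1, 0, 0)]:
    print(bloch, d_xz(rho_from_bloch(*bloch)))

# The two "alternatives" do not add up to the identity operator.
total = [[Px[i][j] + Pz[i][j] for j in range(2)] for i in range(2)]
print(total)
```

For the Bloch vector \((-1,0,0)\), i.e. the "\(x\) down" state, the off-diagonal entry vanishes, yet \(P_x+P_z\) is visibly different from the unit matrix, so the pair still fails the completeness condition.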

In practice, the equation \(D(\alpha,\beta)=0\) is never "quite accurate" so we always ask questions about alternative histories that are only approximately consistent although the accuracy quickly becomes sufficient for all practical and most of impractical purposes. That's a manifestation of the fact that classical physics – and classical reasoning in general – never kicks in quite exactly.

Let me mention that aside from the "weak decoherence" and "medium decoherence" conditions above (the medium one clearly implies the weak one), Gell-Mann and Hartle also discussed a "strong decoherence" condition which would imply both of the previous two but which is too strong and would kill almost all choices of "history collections" whenever the initial state is highly mixed. The condition said that one could express all products \(C_\alpha \rho\) as\[

C_\alpha \rho = R_\alpha \rho

\] where \(R_\alpha\) is a projection operator, a "record projection". So one wants to work with the "medium decoherent" histories.

Cosmic GDP drops 97% since peak star

Staunch Chicken Littles such as Alexander Ač love to talk about "peak oil", a hypothetical moment (and, in their opinion, a predictable and important moment) at which the global oil production reaches its global maximum.

But Phys.ORG has discussed an even more far-reaching peak of something, namely "peak star". The popular article is based on this paper:

A large H\(\alpha\) survey at \(z=2.23,\, 1.47,\, 0.84\, \&\, 0.40\): the \(11\,{\rm Gyr}\) evolution of star-forming galaxies from HiZELS (arXiv, published in Monthly Notices of the Royal Astronomical Society)

If we denote the number of stars produced per year as the "cosmic GDP", the years in which the cosmic GDP was maximized belong to distant memories. Since that time, the star production has slowed down considerably. In fact, the "cosmic GDP" has decreased by a whopping 97% since that moment!

You would surely think that the Universe must be a horrible place to live if the "cosmic GDP" is just 3% of the value in those "good old times". Well, you would surely be wrong. Most of us didn't even know that the star production is so slow relative to the maximum.

The peak was reached about 3 billion years after the Big Bang and I assure you, the world is a much better place today. During the "peak star", there wasn't even any Sun and the Earth – a planet that biased environmentalists consider more important than billions of other planets in the Universe ;-) – didn't exist, either.

I find it rather likely that 3 billion years after the Big Bang, our visible Universe contained no intelligent life but I am sure that many folks who think that "intelligent life is an inevitable omnipresent trash that immediately erupts almost everywhere" will disagree. We don't really know the answer.

It's also being estimated that despite the infinite length of the future life of our Universe that will increasingly approach the empty de Sitter space (its form of energy, the cosmological constant, already makes up over 70% of the energy density in the Universe, so we're already "pretty close" to the empty de Sitter Universe of the asymptotic future), the total number of stars in the cosmic history book will only increase by 5% since this moment. (Well, more precisely, we are talking about the total mass of the stars rather than the number.)

So if you measure the total "amount of fun in the life" as the integral of the product of the number of stars and (i.e. over) time, \(\int \dd t\,N_{\rm stars}\), then about 95% of the fun in the Universe has already taken place and almost nothing is awaiting us! We could also commit collective suicide and we could at most lose 5% of the fun events in our history.

The only problem with this pessimistic, nihilist conclusion is that we know very well that the "total amount of fun happening annually in the Universe" is proportional neither to the number of stars nor to their total mass. As I have mentioned, most of us believe that the life in the Universe is much more fun today than it was during the "peak star" 11 billion years ago.

The star production was needed for our Solar System to be born but many other events were needed for us to be here and to have some fun, too. The latter events depend on the existence of the stars and they're inevitably delayed by a certain period of time. And the things that decide about the GDP or the fun in life today – when the existence of the Solar System may be taken for granted – may proceed at a much faster rate so that those 7.5 billion years of the Sun's remaining life may be enough for a lot of fun – fun that is "almost" infinitely larger than what we have already seen (think about the speculations about the "technological singularity" which may be inaccurate but they're right about the point that the progress or GDP may continue to grow).

This objection is uncontroversial and kind of amusing in the case of "peak star". However, my point is clearly more far-reaching ideologically. My point is that the "amount of fun happening on the Earth every year" is clearly not proportional to the crude oil production, either. It isn't proportional to the electricity that is consumed, it isn't proportional to the number of SUVs or solar panels or soybeans that are sold, it is proportional to nothing particular that may be associated with the life in a given era.

The fun in the life is a totally independent quantity which depends on many things and the relationship of the fun in the life to other quantities is indirect, indeterministic, and it is constantly changing. Moreover, the relevant quantities today, such as the economists' GDP, are changing (and mostly increasing) by several percent per year while the 97% drop in 11 billion years corresponds to a modest 0.00000003% decrease per year which is negligible.
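The tiny annual rate is easy to reproduce. A minimal sketch, assuming the decline is spread as a constant exponential rate over the 11 billion years (an assumption of this example only; the actual history of star formation is of course not that uniform):

```python
import math

remaining = 1 - 0.97   # fraction of the peak "cosmic GDP" left today
years = 11e9           # time since "peak star"

# Constant yearly fractional decrease r solving (1 - r)**years == remaining.
annual_fraction = -math.expm1(math.log(remaining) / years)

print(f"{100 * annual_fraction:.2e} % per year")
```

The result is about \(3\times 10^{-8}\) percent per year, in agreement with the figure quoted above.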

We have mentioned some of the reasons why "peak oil", much like "peak star" – even if we could determine or predict when it exactly occurs, which we can't – has no implications for the things we really care about in the world.

And that's the memo.

Tuesday, November 6, 2012

RSS AMSU: 2012 seems to be 11th warmest on record

For almost 15 years, the climate alarmist bigots have been dreaming about another warm year that would dethrone 1998 as the warmest year on the satellite temperature record. It's totally clear by now that year 2012 won't become this divine signal of their holy global warming they have been desperately waiting and praying for. In fact, after the first 10 months, it doesn't seem to make it into the top ten.

The UAH AMSU satellite temperature product recently experienced some problems and had to be upgraded to a new version. In these ranking texts, I usually refer to RSS AMSU, which seems to be an advantage now.

The ranking of the years 1979-2011 according to RSS AMSU is:

{1998, 0.54871},

{2010, 0.47591},

{2005, 0.330003},

{2003, 0.32197},

{2002, 0.314142},

{2007, 0.258975},

{2001, 0.247164},

{2006, 0.229666},

{2009, 0.225882},

{2004, 0.202923},

{1995, 0.158027},

{2011, 0.147427},

{1999, 0.102792},

{1997, 0.102523},

{1987, 0.0982575},

{2000, 0.0915137},

{1991, 0.081},

{1990, 0.0751534},

{1988, 0.0669781},

{1983, 0.066137},

{2008, 0.0502459},

{1996, 0.0463962},

{1994, 0.0285479},

{1981, 0.0207808},

{1980, 0.0146995},

{1979, –0.0941425},

{1993, –0.117159},

{1989, –0.119378},

{1986, –0.138775},

{1982, –0.1734},

{1992, –0.179363},

{1984, –0.223995},

{1985, –0.260586}.

Sorry for the ludicrous excess precision; I didn't want to spend the time to round the numbers. The average temperature anomaly during the first 10 months of 2012 was +0.2018 °C. If this remained the score for the whole year, 2012 would be the 11th warmest year of the RSS AMSU record.

It's very likely that the November+December 2012 average anomaly will deviate from +0.2018 °C by less than 0.2 °C and because these two months have a 5 times smaller impact than the previous 10 months, I expect the anomaly to change by less than 0.04 °C from the current 0.2018 °C. It seems "very likely" to me.

So 2012 is "very unlikely" to drop to the 12th rank or lower. However, if the last two months of the year are substantially warmer, 2012 can make it up to the 6th or 7th place, not higher.
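The ranking argument can be reproduced with a short script. It is a rough sketch: the anomalies are copied from the list above, the helper names `rank` and `annual_mean` are made up for this illustration, and all twelve months are given equal weight (differences in month length are ignored).

```python
# RSS AMSU annual anomalies (deg C) for 1979-2011, copied from the list above.
rss = {
    1998: 0.54871, 2010: 0.47591, 2005: 0.330003, 2003: 0.32197,
    2002: 0.314142, 2007: 0.258975, 2001: 0.247164, 2006: 0.229666,
    2009: 0.225882, 2004: 0.202923, 1995: 0.158027, 2011: 0.147427,
    1999: 0.102792, 1997: 0.102523, 1987: 0.0982575, 2000: 0.0915137,
    1991: 0.081, 1990: 0.0751534, 1988: 0.0669781, 1983: 0.066137,
    2008: 0.0502459, 1996: 0.0463962, 1994: 0.0285479, 1981: 0.0207808,
    1980: 0.0146995, 1979: -0.0941425, 1993: -0.117159, 1989: -0.119378,
    1986: -0.138775, 1982: -0.1734, 1992: -0.179363, 1984: -0.223995,
    1985: -0.260586,
}

def rank(anomaly, table=rss):
    """Rank a year would get: 1 + number of listed years that were warmer."""
    return 1 + sum(1 for value in table.values() if value > anomaly)

def annual_mean(jan_oct_mean, nov_dec_mean):
    """Annual anomaly from the Jan-Oct and Nov-Dec means (equal monthly weights)."""
    return (10 * jan_oct_mean + 2 * nov_dec_mean) / 12

jan_oct = 0.2018  # mean anomaly of the first 10 months of 2012
print("rank if unchanged:", rank(jan_oct))

# Shift the Nov-Dec mean by up to 0.2 deg C either way and see what happens.
for shift in (-0.2, 0.0, 0.2):
    year = annual_mean(jan_oct, jan_oct + shift)
    print(f"Nov-Dec shift {shift:+.1f}: annual {year:.4f}, rank {rank(year)}")
```

Under these assumptions, a 0.2 °C shift in the November+December mean moves the annual figure by about 0.033 °C and leaves 2012 somewhere between the 8th and the 11th place; climbing higher would require a substantially warmer end of the year.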

BSP (CZ): I don't know. Frost destroys the sunshine...

As I mentioned a month ago, the widely expected 2012-2013 El Niño seems to be delayed, to say the least, and the ENSO neutral conditions continue even though in the latest weekly ENSO report, they're very close to the El Niño threshold again. Without a clear El Niño this winter season, it seems very likely to me that even 2013 will refuse to become the warmest year. That would extend the period without a new warmest year to a whopping 15 years.

Obama-Romney: TRF poll

Update: About 1/3 of U.S. TRF readers voted for Obama and 3/5 of them expected Obama to win. Only 1% of TRF readers voted for Obama but expected Romney to win. Among the non-TRF readers, Romney slightly won the popular vote but by the electoral votes, Obama safely defended his presidency. Dow Jones collapsed by more than two percent after the results.

This poll is very simple and unsurprising.

I want the U.S. readers – who make up 1/2 of the TRF visitors – to report whom they voted for and whom they expect to win. Well, I only mean the U.S. readers who are not yet crying from being tired of Bronko Báma and Mitt Romney.

If you didn't actually physically vote but you have an opinion, please pretend that you did vote. Your vote counts here.

Let me ask Unamerican readers to stay silent because this choice isn't really our business, the business of Unamericans. ;-) I would like to know how politically symmetric or asymmetric the readership of TRF is.

Monday, November 5, 2012

Why subjective quantum mechanics allows objective science

Short answer: Because subjective knowledge (and ignorance) is and has always been compatible with objective science and quantum mechanics simply transmutes all of science to a novel treatment of fundamentally subjective knowledge.

I've had an exchange about the subjective/objective nature of the observation in quantum mechanics with Arnold Neumaier, a mathematician in Vienna.

In my answer, I clarified that what is sometimes called the "collapse of the wave function" is actually a subjective process – it's a change of someone's knowledge because he or she or it or they is/are learning about the value of an observable. This "collapse" is the change of the subjective probabilistic distributions which is also why it may occur "faster than light". The collapse "only occurs in your head".

This basic principle – which I consider absolutely essential for the right understanding of the basics of quantum mechanics – is too counterintuitive for most people and Arnold Neumaier isn't an exception. So he protested:

However, that doesn't mean that it is a necessary condition. Since the 1920s, physicists have known that it is neither a necessary condition nor the correct way to protect the world against contradictions that could result from a generic conglomerate of "subjective viewpoints". Many processes, especially the macroscopic ones, are predicted by quantum mechanics to proceed in a way that admits a classical description with an objective reality.

But what's at least equally important, many others don't. At the fundamental level, quantum mechanics authoritatively and indisputably states that there exists no objective reality that would explain all subjective viewpoints as its reflections. Arnold Neumaier asks how quantum mechanics explains the absence of contradictions despite the non-existence of objective reality; but his question is phrased as a rhetorical one because he isn't really interested in the answer even though the answer is arguably the most important finding of 20th-century science.

Let me discuss a few manifestations of the subjective character of existence implied by the basic postulates of quantum mechanics and explain why it leads to no contradictions.

Wigner's friend: "collapse" isn't objective

Wigner's friend is a guy closed in a lab with Schrödinger's cat. The cat dies if and when some radioactive nucleus decays – its fate is decided by a quantum-style microscopic process that may only be predicted probabilistically. Wigner is outside the lab and may still describe the whole lab, including his friend, in terms of the linear superpositions of all states, including the macroscopically distinct ones, that follow from Schrödinger's equation.

The question is whether the fate of the cat was decided already when Wigner's friend looked at the cat, or only when Wigner himself looked at the whole lab including the cat and his friend.

Wigner's friend may be certain that he has already made a measurement so the fate of the cat was determined rather early. However, Wigner himself only learns about the fate when he does his own measurements, so the state of the cat is determined much later.

The answer to the question "When did the fate of the cat become decided and cease to be murky?" is therefore subjective. Note that with macroscopic processes that admit a classical description, you could claim that the state of the cat was decided "immediately" and this assumption won't drive you into contradictions. But if you considered smaller, more inherently quantum objects and processes, any assumption that the system already had some particular values of observables is enough for you to be driven to wrong predictions. It's very important that quantum mechanics only describes the state of the physical systems as a "murky probabilistic superposition" of different possibilities.

Let me repeat it differently: You are allowed to assume that the observed quantities have already been facts since the moment when the information carried by them got imprinted to the environment many times and "irreversibly" decohered. If you assume that the observation became a "fact" before it decohered, you will be driven to contradictions with experiments.

However, it's also important to notice that decoherence, while extremely fast as soon as it kicks in, is never perfect. The off-diagonal elements of the density matrix in a particular basis never go to zero exactly. (Well, they are zero in some basis because every Hermitian matrix may be diagonalized but in general, it will be a basis that mixes macroscopically distinct states to a comparable extent.)
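A toy model makes the "never exactly zero" statement concrete. The model is an assumption chosen only for illustration, not something derived above: a system qubit \(a\ket 0 + b\ket 1\) interacts with `n_env` environment qubits, each of which stays in its initial state if the system is in \(\ket 0\) and is rotated by the angle `theta` if the system is in \(\ket 1\). Tracing over the environment multiplies the off-diagonal element \(ab\) (real amplitudes assumed) by the product of the overlaps, \(\cos^{n}\theta\).

```python
import math

def off_diagonal(a, b, n_env, theta):
    """Off-diagonal element of the system's reduced density matrix in the toy model.

    Each of the n_env environment qubits contributes an overlap cos(theta)
    between its "system was 0" and "system was 1" states (hypothetical model).
    """
    return a * b * math.cos(theta) ** n_env

a = b = 1 / math.sqrt(2)  # equal superposition, real amplitudes for simplicity
for n_env in (0, 10, 100, 1000):
    print(n_env, off_diagonal(a, b, n_env, theta=0.3))
```

The off-diagonal element shrinks exponentially with the number of environment qubits, so it becomes negligible very quickly, but for any finite environment in this model it stays strictly positive.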

Decoherence is one of the "irreversible" processes, much like the growth of entropy in thermodynamics. But the microscopic description of this irreversibility – whether we mean the statistical physics description of the increasing entropy, or the quantum mechanical description of the origin of decoherence – shows that the "impossibility to reverse things" is never absolute. In statistical physics, it's just unlikely that the entropy will go down; it is not impossible. Analogously, in quantum mechanics, the loss of the information about the relative phase of two complex probability amplitudes is a problem that may be "reverted" to some extent.

But once the increase of the entropy becomes macroscopic, the chances of returning the entropy to the original low value become exponentially tiny and negligible. We say that it's impossible. Similarly, the entanglement of the measured system with the environment quickly becomes so complex that we give up all the hopes to "disentangle" this entanglement.

There's no objective moment at which we may say that it has become impossible to reverse the processes. In practice, people will agree whether it's possible or not but in principle, one may imagine a more accurate extraterrestrial engineer who is capable of reversing processes we consider hopelessly irreversible.

Returning to Wigner's friend, there can't be any contradiction between Wigner and Wigner's friend because the question "When did the fate of the cat become decided?" must be answered by an operational procedure and everyone understands that there's no "canonical" procedure to do so, so the result of any procedure will reflect the particular idiosyncrasies of the procedure. Wigner's friend may prepare records of the dead/alive cat taken well before Wigner returned to the lab. But Wigner may always disagree and say that these photographs have been in a linear superposition of macroscopically different states up to the moment when he returned to the lab.

There can't be any sharp contradiction because the question is an ill-defined question about philosophy, the kind of question you should avoid according to the "shut up and calculate" dictum. Moreover, no one really cares about "when the fate of the cat got decided". We may mathematically derive that if a nucleus decays, the engine will immediately kill the cat. But we don't know whether the nucleus was "objectively" in the decayed state or a linear superposition and we don't really care. What we really care about is what the fate is. Is the cat alive, or dead?

Concerning the latter question, there won't be any contradictions. The evolution in quantum mechanics \[

\ket{\text{dead cat}} \to \ket{\text{dead cat}}\otimes \ket{\text{sad Wigner's friend}} \otimes \ket{\text{sad Wigner}}

\] and similarly for "alive cat" and "happy men" guarantees correlations – using the most general quantum description, it guarantees entanglement – between the state of the cat, the state of Wigner's friend's brain, and the state of Wigner's own brain. We may show – by a simple calculation in quantum mechanics establishing the evolution above (not by classical dogmas about the objective reality!) – that if the cat dies, it will make the "same" impact on Wigner and Wigner's friend if both of their brains are measured. If the cat survives, the measurement of the two men's brains will yield compatible results, too.
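The calculation can be sketched in a few lines, assuming the cat starts in a superposition with amplitudes \(a\) and \(b\) obeying \(|a|^2+|b|^2=1\) (these two symbols, and the labels \(F\) and \(W\) for Wigner's friend and Wigner, are introduced here only for illustration). Linearity of the evolution gives \[

a\ket{\text{dead cat}} + b\ket{\text{alive cat}} \to a\,\ket{\text{dead cat}}\otimes \ket{\text{sad }F} \otimes \ket{\text{sad }W} + b\,\ket{\text{alive cat}}\otimes \ket{\text{happy }F} \otimes \ket{\text{happy }W}.

\] The final state contains no term in which the two men disagree. Taking the "sad" and "happy" states to be orthogonal, the joint probabilities are therefore \[

P(\text{sad }F, \text{happy }W) = P(\text{happy }F, \text{sad }W) = 0, \quad P(\text{sad }F, \text{sad }W) = |a|^2, \quad P(\text{happy }F, \text{happy }W) = |b|^2.

\] The outcome itself is random, with probabilities \(|a|^2\) and \(|b|^2\), but the two men's answers are perfectly correlated.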

So the two men will agree whether or not the cat is alive if both of them perform the measurement. But men – and other physical systems – don't have to agree about the question whether a measurement has taken place. A measurement is a process by which you are gaining the information and whether you are gaining the information – or you want to gain it – is a subjective matter. So people may disagree about the moment.

In classical physics, we were allowed to assume that there existed an objective world that someone could in principle know in the full entirety and accuracy. Individual people's knowledge reflected this objective reality and the ignorance (and statistical tools used to describe the imperfect knowledge) were just reflections of the individuals' imperfection that could have been avoided in principle.

Quantum mechanics shows that our world doesn't work in this way, however. The probabilistic character of the values of any observables is a fundamental property of the laws of physics in our Universe. It is inevitable that the value of most observables we can measure is uncertain and "probabilistically mixed" even a femtosecond before these observables are measured. There is no agent, not even God, who would know the state of the observables a moment before they're measured. The very assumption that such a perfect being exists mathematically contradicts the fact that the operators don't commute with each other; physically, such an assumption will either lead to predictions that disagree with the experimentally measured correlations, or with locality as demanded by the special theory of relativity.

So the question "whether some observation has already become a fact and when" doesn't have any objective, canonical answer – even though many people using the same conventions and models may usually agree. But this agreement only reflects their shared taste and social conventions (i.e. the same values of "tiny probabilities" that they're already willing to identify with zero when they discuss irreversibility of various processes). It doesn't reflect any objective reality that would exist in principle.

Purity of the "right state" is a subjective question, too

People often try to imagine that many other questions have "objective answers", too. One important example is the question:

First of all, the subjective character of the answer directly follows from my previous point, namely the conclusion that "the moment when the measurement took place is a subjective matter". Imagine that Wigner and his friend study any physical system, for example the spin of an electron. Wigner and his friend agree that in the initial state, it is determined by a given density matrix \(\rho\). So it's mixed. But once Wigner's friend measures the spin with respect to the \(z\)-axis, he will find out it's either "up" or "down" and the state of the electron will inevitably become pure – for Wigner's friend. However, Wigner himself will continue to evolve the whole lab via Schrödinger's equation. That means that he will ignore any hypothetical "discontinuous change" associated with the spin measurement and his description will continue to build on a mixed state. The state will be mixed for Wigner but pure for Wigner's friend.
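One way to quantify "mixed" versus "pure" is the purity \({\rm Tr}\,\rho^2\), which equals \(1\) for a pure state and is smaller than \(1\) for a mixed one. The sketch below uses a hypothetical initial density matrix with probabilities \(0.7\) and \(0.3\) for spin up and down along the \(z\)-axis; the numbers and the helper names are assumptions made only for this illustration, and the matrices are taken to be real for simplicity.

```python
def matmul2(x, y):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def purity(rho):
    """Tr(rho^2): equal to 1 for a pure state, below 1 for a mixed state."""
    squared = matmul2(rho, rho)
    return squared[0][0] + squared[1][1]

# Hypothetical initial state that Wigner and his friend agree on (mixed).
rho_initial = [[0.7, 0.0],
               [0.0, 0.3]]

# Wigner's friend measured the spin along z and found "up": pure for him.
rho_friend = [[1.0, 0.0],
              [0.0, 0.0]]

# Wigner has learned nothing about the outcome, so for the electron alone
# he keeps using the mixed state.
rho_wigner = rho_initial

print("friend:", purity(rho_friend))
print("Wigner:", purity(rho_wigner))
```

The two men assign different purities to the same electron, and neither assignment is wrong: each one encodes what the given observer knows.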